Why Isn’t Online Age Verification Just Like Showing Your ID In Person?

This blog also appears in our Age Verification Resource Hub: our one-stop shop for users seeking to understand what age-gating laws actually do, what’s at stake, how to protect yourself, and why EFF opposes all forms of age verification mandates. Head to EFF.org/Age to explore our resources and join us in the fight for a free, open, private, and yes—safe—internet.

One of the most common refrains we hear from age verification proponents is that online ID checks are nothing new. After all, you show your ID at bars and liquor stores all the time, right? And it’s true that many places age-restrict in-person access to various goods and services, such as tobacco, alcohol, firearms, lottery tickets, and even tattoos and body piercings.

But this comparison falls apart under scrutiny. There are fundamental differences between flashing your ID to a bartender and uploading government documents or biometric data to websites and third-party verification companies. Online age-gating is more invasive, affects far more people, and poses serious risks to privacy, security, and free speech that simply don't exist when you buy a six-pack at the corner store.

Online age verification burdens many more people.

Online age restrictions are imposed on many, many more users than in-person ID checks. Because of the sheer scale of the internet, regulations affecting online content sweep in an enormous number of adults and youth alike, forcing them to disclose sensitive personal data just to access lawful speech, information, and services.

Additionally, age restrictions in the physical world affect only a limited number of transactions: those involving a narrow set of age-restricted products or services. Typically this entails a bounded interaction about one specific purchase.

Online age verification laws, on the other hand, target a broad range of internet activities and general purpose platforms and services, including social media sites and app stores. And these laws don’t just wall off specific content deemed harmful to minors (like a bookstore would); they age-gate access to websites wholesale. This is akin to requiring ID every time a customer walks into a convenience store, regardless of whether they want to buy candy or alcohol.

There are significant privacy and security risks that don’t exist offline.

In offline, in-person scenarios, a customer typically provides their physical ID to a cashier or clerk directly. Oftentimes, customers need only flash their ID for a quick visual check, and no personal information is uploaded to the internet, transferred to a third-party vendor, or stored. Online age-gating, on the other hand, forces users to upload—not just momentarily display—sensitive personal information to a website in order to gain access to age-restricted content.

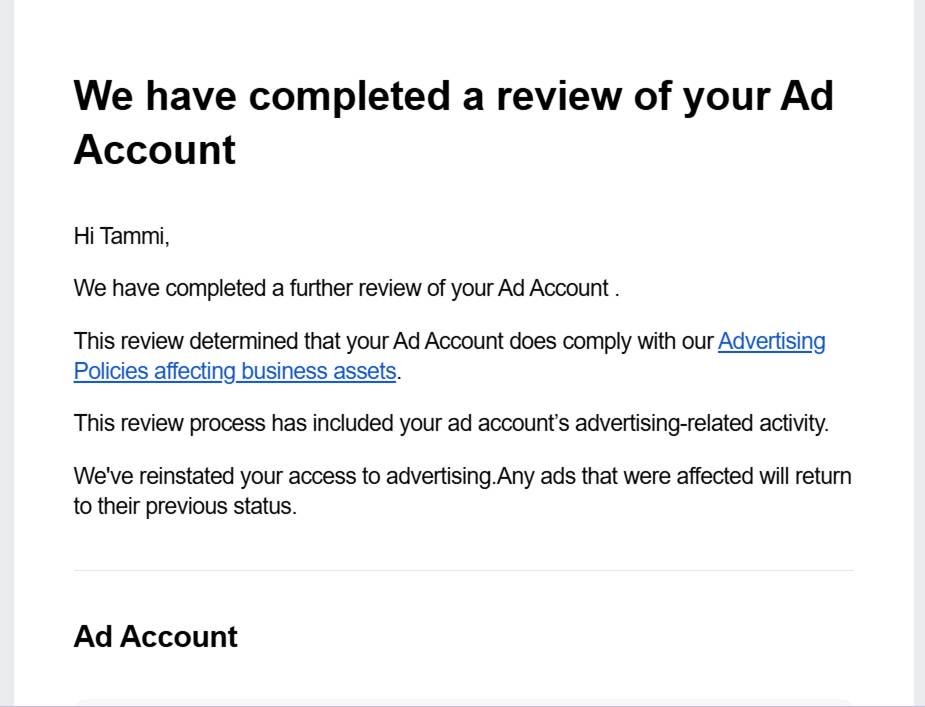

This creates a cascade of privacy and security problems that don’t exist in the physical world. Once sensitive information like a government-issued ID is uploaded to a website or third-party service, there is no guarantee it will be handled securely. You have no direct control over who receives and stores your personal data, where it is sent, or how it may be accessed, used, or leaked outside the immediate verification process.

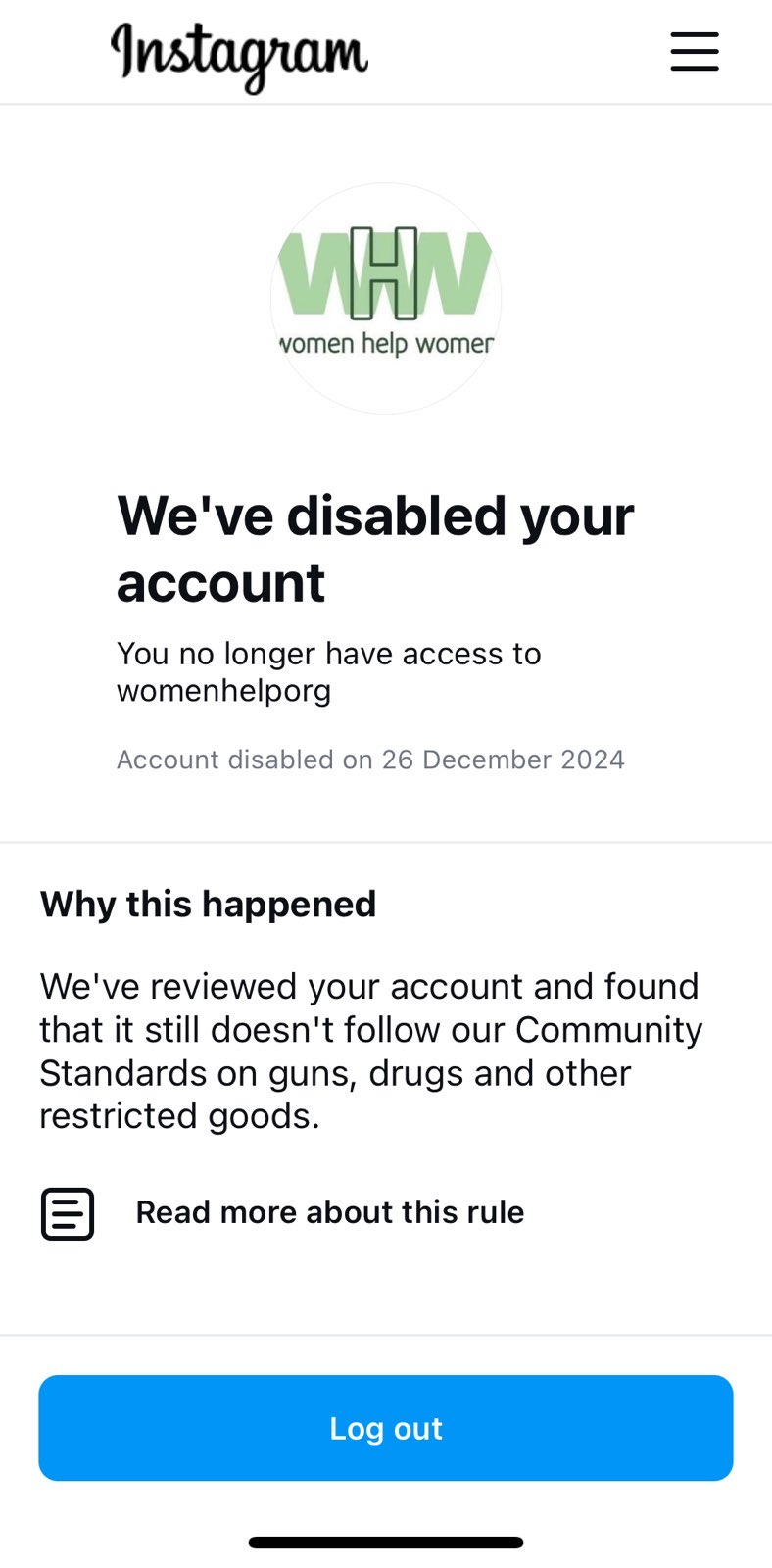

Data submitted online rarely just stays between you and one other party. All online data is transmitted through a host of third-party intermediaries, and almost all websites and services also host a network of dozens of private, third-party trackers managed by data brokers, advertisers, and other companies that are constantly collecting data about your browsing activity. The data is shared with or sold to additional third parties and used to target behavioral advertisements. Age verification tools also often rely on third parties just to complete a transaction: a single instance of ID verification might involve two or three different third-party partners, and age estimation services often work directly with data brokers to offer a complete product. Users’ personal identifying data then circulates among these partners.

All of this increases the likelihood that your data will leak or be misused. Unfortunately, data breaches are an endemic part of modern life, and the sensitive, often immutable, personal data required for age verification is just as susceptible to being breached as any other online data. Age verification companies can be—and already have been—hacked. Once that personal data gets into the wrong hands, victims are vulnerable to targeted attacks both online and off, including fraud and identity theft.

Troublingly, many age verification laws don’t even provide a private right of action that would let you sue a company if your personal data is breached or misused. This leaves you without a direct remedy should something bad happen.

Some proponents claim that age estimation is a privacy-preserving alternative to ID-based verification. But age estimation tools still require biometric data collection, often demanding users submit a photo or video of their face to access a site. And again, once submitted, there’s no way for you to verify how that data is processed or stored. Requiring face scans also normalizes pervasive biometric surveillance and creates infrastructure that could easily be repurposed for more invasive tracking. Once we’ve accepted that accessing lawful speech requires submitting our faces for scanning, we’ve crossed a threshold that’s difficult to walk back.

Online age verification creates even bigger barriers to access.

Online age gates create more substantial access barriers than in-person ID checks do. For those concerned about privacy and security, there is no online analog to a quick visual check of your physical ID. Users may be justifiably discouraged from accessing age-gated websites if doing so means uploading personal data and creating a potentially lasting record of their visit to that site.

Given these risks, age verification also imposes barriers to remaining anonymous that don’t typically exist in person. Anonymity can be essential for those wishing to access sensitive, personal, or stigmatized content online. And users have a right to anonymity, which is “an aspect of the freedom of speech protected by the First Amendment.” Even if a law requires data deletion, users must still be confident that every website and online service with access to their data will, in fact, delete it, something that is in no way guaranteed.

In-person ID checks are additionally less likely to wrongfully exclude people due to errors. Online systems that rely on facial scans are often incorrect, especially when applied to users near the legal age of adulthood. These tools are also less accurate for people with Black, Asian, Indigenous, and Southeast Asian backgrounds, for users with disabilities, and for transgender individuals. This leads to discriminatory outcomes and exacerbates harm to already marginalized communities. And while in-person shoppers can speak with a store clerk if issues arise, these online systems often rely on AI models, leaving users who are incorrectly flagged as minors with little recourse to challenge the decision.

In-person interactions may also be less burdensome for adults who don’t have up-to-date ID. An older adult who forgets their ID at home or lacks current identification is not likely to face the same difficulty accessing material in a physical store, since there are usually distinguishing physical differences between young adults and those older than 35. A visual check is often enough. This matters, as a significant portion of the U.S. population does not have access to up-to-date government-issued IDs. This disproportionately affects Black Americans, Hispanic Americans, immigrants, and individuals with disabilities, who are less likely to possess the necessary identification.

We’re talking about First Amendment-protected speech.

It’s important not to lose sight of what’s at stake here. The good or service age-gated by these laws isn’t alcohol or cigarettes; it’s First Amendment-protected speech. Whether the target is social media platforms or any other online forum for expression, age verification blocks access to constitutionally protected content.

Access to many of these online services is also necessary to participate in the modern economy. While those without ID may function just fine without being able to purchase luxury products like alcohol or tobacco, requiring ID to participate in basic communication technology significantly hinders people’s ability to engage in economic and social life.

This is why it’s wrong to claim online age verification is equivalent to showing ID at a bar or store. This argument handwaves away genuine harms to privacy and security, dismisses barriers to access that will lock millions out of online spaces, and ignores how these systems threaten free expression. Ignoring these threats won’t protect children, but it will compromise our rights and safety.