Trying to take control of your online privacy can feel like a full-time job. But if you break it up into small tasks and take on one project at a time, protecting your privacy becomes much easier. This month we’re going to do just that. For the month of October, we’ll update this post with new tips every weekday that show various ways you can opt out of how tech giants surveil you.

Online privacy isn’t dead. But the tech giants make it a pain in the butt to achieve. With these incremental tweaks to the services we use, we can throw sand in the gears of the surveillance machine and opt out of the ways tech companies attempt to optimize us into advertisement and content viewing machines. We’re also pushing companies to make more privacy-protective defaults the norm, but until that happens, the onus is on all of us to dig into the settings.

Support EFF!

All month long we’ll share tips, including some with help from our friends at Consumer Reports’ Security Planner tool. Use the Table of Contents here to jump straight to any tip.

Table of Contents

Tip 1: Establish Good Digital Hygiene

Before we can get into the privacy weeds, we need to first establish strong basics. Namely, two security fundamentals: using strong passwords (a password manager helps simplify this) and two-factor authentication for your online accounts. Together, they can significantly improve your online privacy by making it much harder for your data to fall into the hands of a stranger.

Using unique passwords for every web login means that if your account information ends up in a data breach, it won’t give bad actors an easy way to unlock your other accounts. Since it’s impossible for all of us to remember a unique password for every login we have, most people will want to use a password manager, which generates and stores those passwords for you.
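To make the "generates" part concrete, here is a minimal Python sketch of creating a random password with the standard library's `secrets` module. The function name `generate_password`, the 20-character default, and the character set are assumptions chosen for illustration; this is not how any particular password manager is implemented.

```python
import secrets
import string

# Hypothetical character set for this example; real password managers
# usually let you configure which characters are allowed.
ALPHABET = string.ascii_letters + string.digits + "!@#$%^&*-_"


def generate_password(length: int = 20) -> str:
    """Return a random password built from ALPHABET with a secure random source."""
    if length < 1:
        raise ValueError("length must be at least 1")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    # One password per login, so a breach at one site does not unlock the others.
    for site in ("example-shop", "example-mail"):
        print(site, generate_password())
```

In practice you would let the password manager do this for you; the sketch only shows why a generated password is far harder to guess than one you make up and reuse.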

Two-factor authentication is the second lock on those same accounts. To log in to, say, Facebook for the first time on a particular computer, you’ll need to provide a password and a “second factor,” usually an always-changing numeric code generated in an app or sent to you on another device. This makes it much harder for someone else to get into your account because it’s less likely they’ll have both a password and the temporary code.
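Many authenticator apps produce those always-changing codes with the time-based one-time password standard (TOTP, RFC 6238). The sketch below shows roughly how such a code is derived from a shared secret and the current time. The function name `totp` and its defaults (30-second steps, 6 digits, HMAC-SHA1) are assumptions for illustration, and a given service may use different parameters.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time code (RFC 6238 with HMAC-SHA1)."""
    key = base64.b32decode(secret_b32, casefold=True)
    now = time.time() if at_time is None else at_time
    # The counter is the number of whole time steps since the Unix epoch.
    counter = int(now // step)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    # Test secret taken from the RFC 6238 examples, not a real account secret.
    demo_secret = base64.b32encode(b"12345678901234567890").decode()
    print(totp(demo_secret))
```

Because the code depends on both the secret and the clock, a stolen password alone is not enough, and a captured code stops working once its time step has passed.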

Getting started can be a little overwhelming if you’re new to online privacy! Aside from our guides on Surveillance Self-Defense, we recommend taking a look at Consumer Reports’ Security Planner for ways to help you set up your first password manager and turn on two-factor authentication.

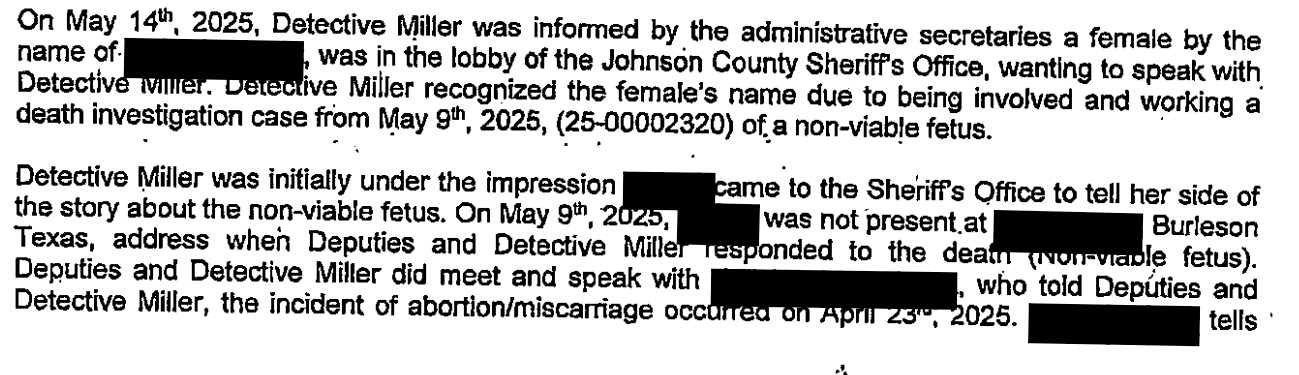

Tip 2: Learn What a Data Broker Knows About You

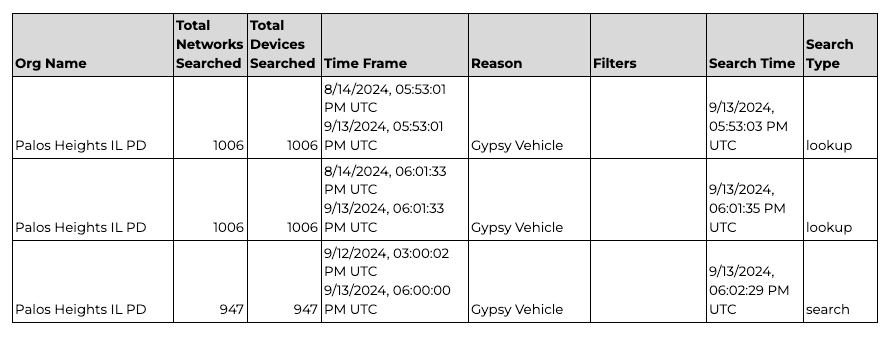

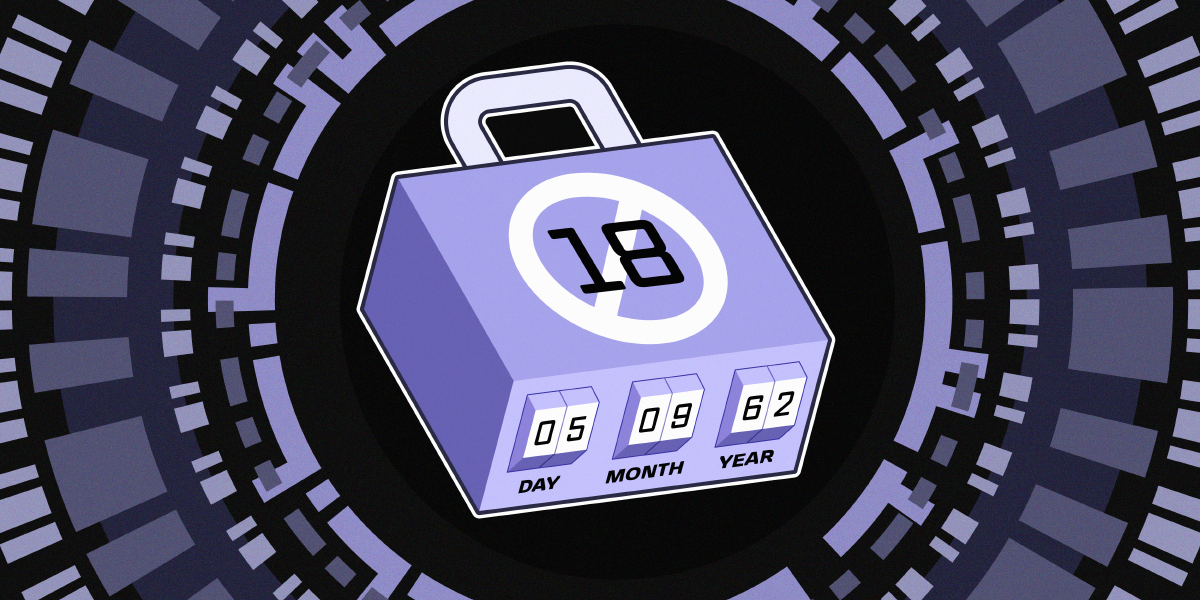

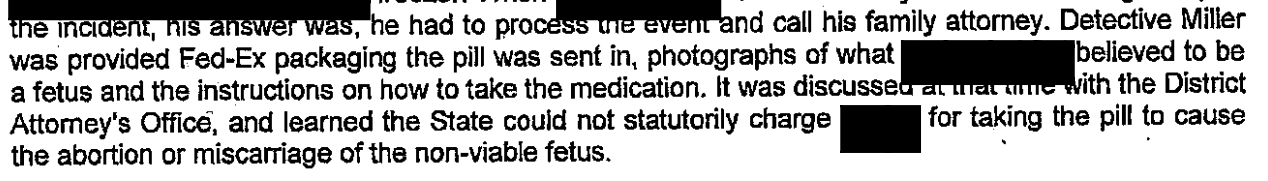

Hundreds of data brokers you’ve never heard of are harvesting and selling your personal information. This can include your address, online activity, financial transactions, relationships, and even your location history. Once sold, your data can be abused by scammers, advertisers, predatory companies, and even law enforcement agencies.

Data brokers build detailed profiles of our lives but try to keep their own practices hidden. Fortunately, several state privacy laws give you the right to see what information these companies have collected about you. You can exercise this right by submitting a data access request to a data broker. Even if you live in a state without privacy legislation, some data brokers will still respond to your request.

There are hundreds of known data brokers, but here are a few major ones to start with:

Data brokers have been caught ignoring privacy laws, so there’s a chance you won’t get a response. If you do, you’ll learn what information the data broker has collected about you and the categories of third parties they’ve sold it to. If the results motivate you to take more privacy action, encourage your friends and family to do the same. Don’t let data brokers keep their spying a secret.

You can also ask data brokers to delete your data, with or without an access request. We’ll get to that later this month and explain how to do this with people-search sites, a category of data brokers.

Tip 3: Disable Ad Tracking on iPhone and Android

Picture this: you’re doomscrolling and spot a t-shirt you love. Later, you mention it to a friend and suddenly see an ad for that exact shirt in another app. The natural question pops into your head: “Is my phone listening to me?” Breathe a sigh of relief because, no, your phone is not listening to you. But advertisers are using shady tactics to profile your interests. Here’s an easy way to fight back: disable the ad identifier on your phone to make it harder for advertisers and data brokers to track you.

Disable Ad Tracking on iOS and iPadOS:

- Open Settings > Privacy & Security > Tracking, and turn off “Allow Apps to Request to Track.”

- Open Settings > Privacy & Security > Apple Advertising, and disable “Personalized Ads” to also stop some of Apple’s internal tracking for apps like the App Store.

- If you use Safari, go to Settings > Apps > Safari > Advanced and disable “Privacy Preserving Ad Measurement.”

Disable Ad Tracking on Android:

- Open Settings > Security & privacy > Privacy controls > Ads, and tap “Delete advertising ID.”

- While you’re at it, run through Google’s “Privacy Checkup” to review what info other Google services—like YouTube or your location—may be sharing with advertisers and data brokers.

These quick settings changes can help keep bad actors from spying on you. For a deeper dive on securing your iPhone or Android device, be sure to check out our full Surveillance Self-Defense guides.

Tip 4: Declutter Your Apps

Decluttering is all the rage for optimizers and organizers alike, but did you know a cleansing sweep through your apps can also help your privacy? Apps collect a lot of data, often in the background when you are not using them. This can be a prime way companies harvest your information, and then repackage and sell it to other companies you've never heard of. Having a lot of apps increases the number of peepholes companies have into your personal life.

Do you need three airline apps when you're not even traveling? Or the app for that hotel chain you stayed in once? It's best to delete that app and cut off their access to your information. In an ideal world, app makers would not process any of your data unless strictly necessary to give you what you asked for. Until then, to do an app audit:

- Look through the apps you have and identify ones you rarely open or barely use.

- Long-press on apps that you don't use anymore and delete or uninstall them when a menu pops up.

- Even on apps you keep, take a swing through the location, microphone, or camera permissions for each of them. For iOS devices you can follow these instructions to find that menu. For Android, check out this instructions page.

If you delete an app and later find you need it, you can always redownload it. Try giving some apps the boot today to gain some memory space and some peace of mind.

Tip 5: Disable Behavioral Ads on Amazon

Happy Amazon Prime Day! Let’s celebrate by taking back a piece of our privacy.

Amazon collects an astounding amount of information about your shopping habits. While the only way to truly free yourself from the company’s all-seeing eye is to never shop there, there is something you can do to disrupt some of that data use: tell Amazon to stop using your data to market more things to you (these settings are for US users and may not be available in all countries).

- Log into your Amazon account, then click “Account & Lists” under your name.

- Scroll down to the “Communication and Content” section and click “Advertising preferences” (or just click this link to head directly there).

- Click the option next to “Do not show me interest-based ads provided by Amazon.”

- You may want to also delete the data Amazon already collected, so click the “Delete ad data” button.

This setting will turn off the personalized ads based on what Amazon infers about you, though you will likely still see recommendations based on your past purchases at Amazon.

Of course, Amazon sells a lot of other products. If you own an Alexa, now’s a good time to review the few remaining privacy options available to you after the company took away the ability to disable voice recordings. Kindle users might want to turn off some of the data usage tracking. And if you own a Ring camera, consider enabling end-to-end encryption to ensure you’re in control of the recording, not the company.

Tip 6: Install Privacy Badger to Block Online Trackers

Every time you browse the web, you’re being tracked. Most websites contain invisible tracking code that lets companies collect and profit from your data. That data can end up in the hands of advertisers, data brokers, scammers, and even government agencies. Privacy Badger, EFF’s free browser extension, can help you fight back.

Privacy Badger automatically blocks hidden trackers to stop companies from spying on you online. It also tells websites not to share or sell your data by sending the “Global Privacy Control” signal, which is legally binding under some state privacy laws. Privacy Badger has evolved over the past decade to fight various methods of online tracking. Whether you want to protect your sensitive information from data brokers or just don’t want Big Tech monetizing your data, Privacy Badger has your back.
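The Global Privacy Control signal itself is small: a browser or extension with it enabled adds a `Sec-GPC: 1` header to the requests it sends. As a rough sketch of what honoring it could look like on the receiving end, the function below checks a request's headers for that signal. The function name `requests_opt_out` and the plain mapping of header names to values are assumptions for illustration; this is not Privacy Badger's code or any particular website's implementation.

```python
from typing import Mapping


def requests_opt_out(headers: Mapping[str, str]) -> bool:
    """Return True if the request headers carry the Global Privacy Control signal."""
    # HTTP header names are case-insensitive, so normalize them before the lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("sec-gpc") == "1"


if __name__ == "__main__":
    # Hypothetical incoming request headers.
    incoming = {"User-Agent": "ExampleBrowser/1.0", "Sec-GPC": "1"}
    if requests_opt_out(incoming):
        print("Visitor has asked that their data not be sold or shared.")
```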

Visit privacybadger.org to install Privacy Badger.

It’s available on Chrome, Firefox, Edge, and Opera for desktop devices and Firefox and Edge for Android devices. Once installed, all of Privacy Badger’s features work automatically. There’s no setup required! If blocking harmful trackers ends up breaking something on a website, you can easily turn off Privacy Badger for that site while maintaining privacy protections everywhere else.

When you install Privacy Badger, you’re not just protecting yourself—you’re joining EFF and millions of other users in the fight against online surveillance.

Tip 7: Review Location Tracking Settings

Data brokers don’t just collect information on your purchases and browsing history. Mobile apps that have the location permission turned on will deliver your coordinates to third parties in exchange for insights or monetary kickbacks. Even when they don’t deliver that data directly to data brokers, if the app serves ad space, your location will be delivered in real-time bid requests not only to those wishing to place an ad, but to all participants in the ad auction—even if they lose the bid. Location data brokers take part in these auctions just to harvest location data en masse, without any intention of buying ad space.

Luckily, you can change a few settings to protect yourself against this hoovering of your whereabouts. You can use iOS or Android tools to audit an app’s permissions, which clarifies who is providing what information to whom. You can then go to the apps that don’t need your location data and disable their access to that data (you can always change your mind later if it turns out location access was useful). You can also disable real-time location tracking by putting your phone into airplane mode, while still being able to navigate using offline maps. And by disabling mobile advertising identifiers (see Tip 3), you break the chain that links your location from one moment to the next.

Finally, for particularly sensitive situations you may want to bring an entirely separate, single-purpose device that you’ve kept free of unneeded apps and configured with locked-down settings. The concept is similar to a burner phone: even if this single-purpose device does manage to gather data on you, it can only tell a partial story about you, because all the other data linking you to your normal activities will be kept separate.

For details on how you can follow these tips and more on your own devices, check out our more extensive post on the topic.

Tip 8: Limit the Data Your Gaming Console Collects About You

Oh, the beauty of gaming consoles—just plug in and play! Well... after you speed-run through a bunch of terms and conditions, internet setup, and privacy settings. If you rushed through those startup screens, don’t worry! It’s not too late to limit the data your console is collecting about you. Because yes, modern consoles do collect a lot about your gaming habits.

Start with the basics: make sure you have two-factor authentication turned on for your accounts. PlayStation, Xbox, and Nintendo all have guides on their sites. Between payment details and other personal info tied to these accounts, 2FA is an easy first line of defense for your data.

Then, it’s time to check the privacy controls on your console:

- PlayStation 5: Go to Settings > Users and Accounts > Privacy to adjust what you share with both strangers and friends. To limit the data your PS5 collects about you, go to Settings > Users and Accounts > Privacy, where you can adjust settings under Data You Provide and Personalization.

- Xbox Series X|S: Press the Xbox button > Profile & System > Settings > Account > Privacy & online safety > Xbox Privacy to fine-tune your sharing. To manage data collection, head to Profile & System > Settings > Account > Privacy & online safety > Data collection.

- Nintendo Switch: The Switch doesn’t share as much data by default, but you still have options. To control who sees your play activity, go to System Settings > Users > [your profile] > Play Activity Settings. To opt out of sharing eShop data, open the eShop, select your profile (top right), then go to Google Analytics Preferences > Do Not Share.

Plug and play, right? Almost. These quick checks can help keep your gaming sessions fun—and more private.

Tip 9: Hide Your Start and End Points on Strava

Sharing your personal fitness goals, whether extended distances, accurate calorie counts, or GPS paths, sounds like a fun, competitive feature offered by today's digital fitness trackers. If you enjoy tracking those activities, you've probably heard of Strava. While it's excellent for motivation and connecting with fellow athletes, Strava's default settings can reveal sensitive information about where you live, work, or exercise, creating serious security and privacy risks. Fortunately, Strava gives you control over how much of your activity map is visible to others, allowing you to stay active in your community while protecting your personal safety.

We've covered how Strava data exposed classified military bases in 2018 when service members used fitness trackers. If fitness data can compromise national security, what's it revealing about you?

Here's how to hide your start and end points:

- On the website: Hover over your profile picture > Settings > Privacy Controls > Map Visibility.

- On mobile: Open Settings > Privacy Controls > Map Visibility.

- You can then choose from three options: hide portions near a specific address, hide start/end of all activities, or hide entire maps.

You can also adjust individual activities:

- Open the activity you want to edit.

- Select the three-dot menu icon.

- Choose "Edit Map Visibility."

- Use sliders to customize what's hidden or enable "Hide the Entire Map."

Great job taking control of your location privacy! Remember that these settings only apply to Strava, so if you share activities to other platforms, you'll need to adjust those privacy settings separately. While you're at it, consider reviewing your overall activity visibility settings to ensure you're only sharing what you want with the people you choose.

Tip 10: Find and Delete an Account You No Longer Use

Millions of online accounts are compromised each year. The more accounts you have, the more at risk you are of having your personal data illegally accessed and published online. Even if you don’t suffer a data breach, there’s also the possibility that someone could find one of your abandoned social media accounts containing information you shared publicly on purpose in the past, but don’t necessarily want floating around anymore. And companies may still be profiting off details of your personal life, even though you’re not getting any benefit from their service.

So, now’s a good time to find an old account to delete. There may be one you can already think of, but if you’re stuck, you can look through your password manager, look through logins saved on your web browser, or search your email inbox for phrases like “new account,” “password,” “welcome to,” or “confirm your email.” Or, enter your email address on the website HaveIBeenPwned to get a list of sites where your personal information has been compromised, and check whether any of them are accounts you no longer use.
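If you have exported a list of email subject lines, the inbox search can be roughly approximated in a few lines of Python. This is only a sketch: the function name `likely_signup_emails` and the sample subjects are made up for illustration, and searching directly in your email client works just as well.

```python
# Phrases that often appear in account sign-up and confirmation emails.
SIGNUP_PHRASES = ("new account", "password", "welcome to", "confirm your email")


def likely_signup_emails(subjects):
    """Return the subject lines that contain any sign-up phrase, ignoring case."""
    return [s for s in subjects
            if any(phrase in s.lower() for phrase in SIGNUP_PHRASES)]


if __name__ == "__main__":
    # Hypothetical subject lines, not real data.
    inbox = [
        "Welcome to ExamplePhotos!",
        "Lunch on Friday?",
        "Please confirm your email for ExampleForum",
        "Reset your password",
    ]
    for subject in likely_signup_emails(inbox):
        print(subject)
```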

Once you’ve decided on an account, you’ll need to find the steps to delete it. Simply deleting an app off of your phone or computer does not delete your account. Often you can log in and look in the account settings, or find instructions in the help menu, the FAQ page, or the pop-up customer service chat. If that fails, use a search engine to see if anybody else has written up the steps for deleting your specific type of account.

For more information, check out the Delete Unused Accounts tip on Security Planner.

Tip 11: Search for Yourself

Today's tip may sound a little existential, but we're not suggesting a deep spiritual journey. Just a trip to your nearest search engine. Pop your name into search engines such as Google or DuckDuckGo, or even AI tools such as ChatGPT, to see what you find. This is one of the simplest things you can do to raise your own awareness of your digital reputation. It can be the first thing prospective employers (or future first dates) do when trying to figure out who you are. From a privacy perspective, doing it yourself can also shed light on how your information is presented to the general public. If there's a defunct social media account you'd rather keep hidden, but it's on the first page of your search results, that might be a good signal for you to finally delete that account. If you shared your cellphone number with an organization you volunteer for and it's on their home page, you can ask them to take it down.

Knowledge is power. It's important to know what search results are out there about you, so you understand what people see when they look for you. Once you have this overview, you can make better choices about your online privacy.

Tip 12: Tell “People Search” Sites to Delete Your Information

When you search online for someone’s name, you’ll likely see results from people-search sites selling their home address, phone number, relatives’ names, and more. People-search sites are a type of data broker with an especially dangerous impact. They can expose people to scams, stalking, and identity theft. Submit opt-out requests to these sites to reduce the amount of personal information that is easily available about you online.

Check out this list of opt-out links and instructions for more than 50 people search sites, organized by priority. Before submitting a request, check that the site actually has your information. Here are a few high-priority sites to start with:

Data brokers continuously collect new information, so your data could reappear after being deleted. You’ll have to re-submit opt-outs periodically to keep your information off of people-search sites. Subscription-based services can automate this process and save you time, but a Consumer Reports study found that manual opt-outs are more effective.

Tip 13: Remove Your Personal Addresses from Search Engines

Your home address may often be found with just a few clicks online. Whether you're concerned about your digital footprint or looking to safeguard your physical privacy, understanding where your address appears and how to remove or obscure it is a crucial step. Here's what you need to know.

Your personal addresses can be available through public records like property purchases, medical licensing information, or data brokers. Opting out from data brokers will do a lot to remove what's available commercially, but sometimes you can't erase the information entirely from things like property sales records.

You can ask some search engines to remove your personal information from search indexes, which is the most efficient way to make information like your personal addresses, phone number, and email address a lot harder to find. Google has a form that makes this request quite easy, and we’d suggest starting there.

Tip 14: Check Out Signal

Here's the problem: many of your texts aren't actually private. Phone companies, government agencies, and app developers can all too often peek at your conversations.

So on Global Encryption Day, our tip is to check out Signal—a messaging app that actually keeps your conversations private.

Signal uses end-to-end encryption, meaning only you and your recipient can read your messages—not even Signal can see them. Security experts love Signal because it's run by a privacy-focused nonprofit, funded by donations instead of data collection, and its code is publicly auditable.

Beyond privacy, Signal offers free messaging and calls over Wi-Fi, helping you avoid SMS charges and international calling fees. The only catch? Your contacts need Signal too, so start recruiting your friends and family!

How to get started: Download Signal from your app store, verify your phone number, set a secure PIN, and start messaging your contacts who join you. Consider also setting up a username so people can reach you without sharing your phone number. For more detailed instructions, check out our guide.

Global Encryption Day is the perfect time to protect your communications. Take your time to explore the app, and check out other privacy-protecting features like disappearing messages, session verification, and lock screen notification privacy.

Tip 15: Switch to a Privacy-Protective Browser

Your browser stores tons of personal information: browsing history, tracking cookies, and data that companies use to build detailed profiles for targeted advertising. The browser you choose makes a huge difference in how much of this tracking you can prevent.

Most people use Chrome or Safari, which are automatically installed on Google and Apple products, but these browsers have significant privacy drawbacks. For example: Chrome's Incognito mode only hides history on your device—it doesn't stop tracking. Safari has been caught storing deleted browser history and collecting data even in private browsing mode.

Firefox is one alternative that puts privacy first. Unlike Chrome, Firefox automatically blocks trackers and ads in Private Browsing mode and prevents websites from sharing your data between sites. It also warns you when websites try to extract your personal information. But Firefox isn't your only option—other privacy-focused browsers like DuckDuckGo, Brave, and Tor also offer strong protections with different features. The key is switching away from browsers that prioritize data collection over your privacy.

Switching is easy: download your chosen browser from the links above and install it. Most browsers let you import bookmarks and passwords during setup.

You now have a new browser! Take some time to explore your new browser's privacy settings to maximize your protection.

Tip 16: Give Yourself Another Online Identity

We all take on different identities at times. Just as it's important to set boundaries in your daily life, the same can be true for your digital identity. For many reasons, people may want to keep aspects of their lives separate—and giving people control over how their information is used is one of the fundamental reasons we fight for privacy. Consider chopping up pieces of your life over separate email accounts, phone numbers, or social media accounts.

This can help you manage your life and keep a full picture of your private information out of the hands of nosy data-mining companies. Maybe you volunteer for an organization in your spare time that you'd rather keep private, want to keep emails from your kids' school separate from a mountain of spam, or simply would rather keep your professional and private social media accounts separate.

Whatever the reason, consider whether there's a piece of your life that could benefit from its own identity. When you set up these boundaries, you can also protect your privacy.

Tip 17: Check Out Virtual Card Services

Ever encounter an online vendor selling something that’s just what you need—if you could only be sure they aren’t skimming your credit card number? Or maybe you trust the vendor, but aren’t sure the web site (seemingly written in some arcane e-commerce platform from 1998) won’t be hacked within the hour after your purchase? Buying those bits and bobs shouldn’t cost you your peace of mind on top of the dollar amount. For these types of purchases, we recommend checking out a virtual card service.

These services generate a seemingly random credit card number for your use, locked down in ways that you specify. This may mean a card locked to a single vendor, where no one else can make charges on it. Or the card could approve charges only for a specific category of purchase, such as clothing. Beyond limiting vendors, you can set a spending limit that a card can’t exceed, or make it a one-time-use card that closes itself after a single purchase. You can even pause a card if you are sure you won’t be using it for some time, and then unpause it later. The configuration is up to you.
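To show how those rules fit together, here is a small Python sketch of a card that checks each charge against its settings. Everything in it (the `VirtualCard` class, its field names, and the `authorize` method) is hypothetical and only models the options described above; it is not the API of any real virtual card service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VirtualCard:
    """Hypothetical model of a virtual card and its restrictions."""
    merchant_lock: Optional[str] = None      # only this vendor may charge the card
    spend_limit_cents: Optional[int] = None  # total the card may ever spend
    single_use: bool = False                 # close after the first approved charge
    paused: bool = False
    closed: bool = False
    spent_cents: int = 0

    def authorize(self, merchant: str, amount_cents: int) -> bool:
        """Approve the charge only if every configured restriction allows it."""
        if self.closed or self.paused:
            return False
        if self.merchant_lock is not None and merchant != self.merchant_lock:
            return False
        if (self.spend_limit_cents is not None
                and self.spent_cents + amount_cents > self.spend_limit_cents):
            return False
        self.spent_cents += amount_cents
        if self.single_use:
            self.closed = True
        return True


if __name__ == "__main__":
    card = VirtualCard(merchant_lock="example-shop", spend_limit_cents=5000)
    print(card.authorize("example-shop", 3000))   # within the limit, right vendor
    print(card.authorize("someone-else", 100))    # wrong vendor, so declined
```

The point of the model is that a leaked card number is only useful within the narrow limits you set, which is what makes these cards attractive for vendors you are unsure about.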

There are a number of virtual card services available, like Privacy.com or IronVest, just to name a few. Just like any vendor, though, these services need some way to charge you. So for any virtual card service, pop them into your favored search engine to verify they’re legit, and aren’t going to burden you with additional fees. Some options may also only be available in specific countries or regions, due to financial regulation laws.

Tip 18: Minimize Risk While Using Digital Payment Apps

Digital payment apps like Cash App, Venmo, and Zelle generally offer fewer fraud protections than credit cards offered by traditional financial institutions. It’s safer to stick to credit cards when making online purchases. That said, there are ways to minimize your risk.

Turn on transaction alerts:

- On Cash App, tap on your picture or initials on the right side of the app. Tap Notifications, and then Transactions. From there, you can toggle the settings to receive a push notification, a text, and/or an email with receipts or to track activity on the app.

- On PayPal, tap on the top right icon to access your account. Tap Notification Preferences, click on “Open Settings” and toggle to “Allow Notifications” if you’d like to see those on your phone.

- On Venmo, tap on your picture or initials to go to the Me tab. Then, tap the Settings gear in the top right of the app, and tap Notifications. From there, you can adjust your text and email notifications, and even turn on push notifications.

Report suspected fraud quickly

If you receive a notification for a purchase you didn’t make, even if it’s a small amount, make sure to immediately report it. Scammers sometimes test the waters with small amounts to see whether or not their targets are paying attention. Additionally, you may be on the hook for part of the payment if you don’t act fast. PayPal and Venmo say they cover lost funds if they’re reported within 60 days, but Cash App has more complicated restrictions, which can include fees of up to $500 if you lose your device or password and don’t report it within 48 hours.

And don’t forget to turn on multifactor authentication for each app. For more information, check out these tips from Consumer Reports.

Tip 19: Turn Off Ad Personalization to Limit How the Tech Giants Monetize Your Data

Tech companies make billions by harvesting your personal data and using it to sell hyper-targeted ads. This business model drives them to track us far beyond their own platforms, gathering data about our online and offline activity. Surveillance-based advertising isn’t just creepy—it’s harmful. The systems that power hyper-targeted ads can also funnel your personal information to data brokers, advertisers, scammers, and law enforcement agencies.

To limit how companies monetize your data through surveillance-based advertising, turn off ad personalization on your accounts. This setting looks different depending on the platform, but here are some key places to start:

Banning online behavioral ads would be a better solution, but turning off ad personalization is a quick and easy step to limit how tech companies profit from your data. And don’t forget to change this same setting on Amazon, too.

Tip 20: Tighten Account Privacy Settings

Just because you want to share information with select friends and family on social media doesn’t necessarily mean you want to broadcast everything to the entire world. Whether you want to make sure you’re not sharing your real-time location with someone you’d rather not bump into or only want your close friends to know about your favorite pop star, you can typically restrict how companies display your status updates and other information.

In addition to whether data is displayed publicly or just to a select group of contacts, you may have some control over how data is collected, used, and shared with advertisers, or how long it is stored for.

To get started, choose an account and review the privacy settings, making changes as needed. Here are links to a few of the major companies to get you started:

Unfortunately, you may need to tweak your privacy settings multiple times to get them the way you want, as companies often introduce new features that are less private by default. And while companies sometimes offer choices on how data is collected, you can’t control most of the data collection that takes place. For more information, see Security Planner.

Tip 21: Protect Your Data When Dating Online

Dating apps like Grindr and Tinder collect vast amounts of intimate details, everything from sexual preferences to location history and behavioral patterns, all from people who are just looking for love and connection. This data falling into the wrong hands can come with unacceptable consequences, especially for members of the LGBTQ+ community and other vulnerable users who urgently need privacy protections.

To ensure that finding love does not involve such a privacy-impinging tradeoff, follow these tips to protect yourself when dating online:

- Review your login information and make sure to use a strong, unique password for your accounts; and enable two-factor authentication when offered.

- Disable behavioral ads so personal details about you cannot be used to create a comprehensive portrait of your life—including your sexual orientation.

- Review the app’s access to your location and camera roll, and consider changing these permissions in line with what information you would like to keep private.

- Consider what photos you choose, upload, and share; and assume that everything can and will be made public.

- Disable the integration of third-party apps like Spotify if you want more privacy.

- Be mindful of what you share with others when you first chat, such as not disclosing financial details, and trust your gut if something feels off.

There isn't one singular way to use dating apps, but taking these small steps can have a big impact on staying safe when dating online.

Tip 22: Turn Off Automatic Content Recognition (ACR) On Your TV

You might think TVs are just meant to be watched, but it turns out TV manufacturers do their fair share of watching what you watch, too. This is done through technology called “automatic content recognition” (ACR), which snoops on and identifies what you’re watching by snapping screenshots and comparing them to a big database. How many screenshots? The Markup found some TVs captured up to 7,200 images per hour. The main reason? Ad targeting, of course.

Any TV that’s connected to the internet likely does this alongside now-standard snooping practices, like tracking what apps you open and where you’re located. ACR is particularly nefarious, though, as it can identify not just streaming services, but also offline content, like video games, over-the-air broadcasts, and physical media. What we watch can and should be private, but that’s especially true when we’re using media that’s otherwise not connected to the internet, like Blu-Rays or DVDs.

Opting out of ACR can be a bit of a chore, but it is possible on most smart TVs. Consumer Reports has guides for most of the major TV manufacturers.

And that’s it for Opt Out October. Hopefully you’ve come across a tip or two that you didn’t know about, and found ways to protect your privacy, and disrupt the astounding amount of data collection tech companies do.